DISCLAIMER: This article was migrated from the legacy personal technical blog originally hosted here, and thus may contain formatting and content differences compared to the original post. Additionally, it likely contains technical inaccuracies, opinions that the author may no longer align with, and most certainly poor use of English. This article remains public for those who may find it useful despite its flaws.

The introduction of Shader Model 5.0 hardware and the API support provided by OpenGL 4.0 made GPU-based geometry tessellation a first-class citizen in the latest graphics applications. While official support from all the commodity graphics card vendors and the relevant APIs is quite recent news, few people know that hardware tessellation has a long history in the world of consumer graphics cards. In this article I would like to present a brief introduction to tessellation and discuss the evolution that resulted in what we can see in the latest technology demos and game titles.

Introduction

Geometry tessellation is a graphics technique used to amplify the geometric detail of a particular mesh. This is done by subdividing the polygons of the mesh into smaller polygons and, if needed, altering the position of the generated vertices to better fit the theoretical shape of the object that is being modeled by the mesh.
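To make the subdivision step more concrete, here is a minimal Python sketch of uniformly subdividing a single triangle. It is only an illustration under my own assumptions: the function name `subdivide_triangle` and the `segments` parameter (number of pieces each edge is split into) are hypothetical and do not correspond to any API discussed in this article.

```python
from fractions import Fraction

def subdivide_triangle(segments):
    """Uniformly subdivide a triangle.

    Returns (points, triangles): points are barycentric coordinates,
    triangles are triples of indices into the points list.
    """
    n = segments
    index = {}
    points = []
    # Generate a regular grid of barycentric coordinates.
    for i in range(n + 1):
        for j in range(n + 1 - i):
            index[(i, j)] = len(points)
            points.append((Fraction(i, n), Fraction(j, n), Fraction(n - i - j, n)))
    triangles = []
    for i in range(n):
        for j in range(n - i):
            # "Upright" triangle of the current grid cell.
            triangles.append((index[(i, j)], index[(i + 1, j)], index[(i, j + 1)]))
            # "Inverted" triangle, missing only in the last cell of each row.
            if j < n - i - 1:
                triangles.append((index[(i + 1, j)], index[(i + 1, j + 1)], index[(i, j + 1)]))
    return points, triangles

points, triangles = subdivide_triangle(4)
print(len(points), len(triangles))
```

Splitting each edge into n pieces yields (n + 1)(n + 2) / 2 vertices and n² triangles, which shows how quickly the amount of geometry grows with the subdivision level.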

Tessellation has long been a common technique in offline rendering software for adding a greater level of realism to computer-modeled objects, and it has often been used as a preprocessing step for real-time graphics applications as well. However, because of the increased amount of geometry data, the use of tessellated geometry was very limited in the early eras of real-time computer graphics: it needed a huge amount of disk and memory storage as well as much higher processing capabilities in order to achieve interactive frame rates.

The key problem with tessellating offline and then using the detailed mesh in real-time graphics is that even the latest generation of GPUs lacks the memory size and bandwidth needed to make this a practical approach. And that is before counting the additional cost of running a much larger dataset through possibly complex vertex processing steps like skeletal animation. Having the tessellation technology integrated into the GPU makes it possible to overcome most of these restrictions.

While hardware tessellation as a generic feature made its way to the relevant APIs only in the recent past, there were a few earlier efforts by various hardware generations to make this technology popular. To present the evolution of hardware tessellation I will go through the relevant technologies in chronological order, which also helps to see why this great feature didn’t make its way to the core API specifications until now.

TruForm

The first consumer graphics card featuring hardware tessellation that made its way to the market was the ATI Radeon 8500 in 2001. The tessellation feature of the GPU became known as TruForm and was soon available in OpenGL via the extension GL_ATI_pn_triangles, but the functionality never made its way into core due to the lack of any similar hardware support from other graphics card vendors.

The tessellation hardware present in the Radeon 8500 was a completely fixed-function component with predefined tessellation evaluation modes, even though the GPU already supported both programmable vertex and fragment processing. It is also interesting that the tessellator operated on vertices emitted by the vertex shader if one was present.

The tessellator itself has one configurable parameter: the tessellation level. This controls the number of cuts that are performed over each edge of the input primitive, which in the case of TruForm must always be a triangle (whether it comes from a list, strip or fan). As support for the extension was removed a few years ago, unfortunately I cannot tell the upper limit for the tessellation level supported by TruForm, but I remember it as being about 15 or so (I hope somebody can confirm it or correct me).

Besides that, the tessellation evaluator has a few other configurable parameters that control how vertex positions and normals are evaluated after the geometry amplification. For normals there is a linear and a quadratic interpolation mode; for vertex positions, linear and cubic interpolation are available. All the rest of the vertex attributes are linearly interpolated over the tessellated geometry.
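The cubic position mode can be illustrated with the curved PN triangle construction that the extension name GL_ATI_pn_triangles refers to. The Python sketch below is my own reading of that published scheme, not the actual hardware logic; the helper names (`dot`, `combine`, `edge_point`, `pn_position`) are hypothetical, and only the position evaluation is shown, not the quadratic normal mode.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def combine(*terms):
    """Weighted sum of 3D vectors given as (weight, vector) pairs."""
    return tuple(sum(weight * vec[k] for weight, vec in terms) for k in range(3))

def edge_point(pa, pb, na):
    """Control point one third along pa -> pb, projected into the tangent plane at pa."""
    offset = dot(combine((1.0, pb), (-1.0, pa)), na)
    return combine((2.0 / 3.0, pa), (1.0 / 3.0, pb), (-offset / 3.0, na))

def pn_position(p1, p2, p3, n1, n2, n3, u, v):
    """Cubic PN triangle position for barycentric weights (w, u, v), w = 1 - u - v."""
    w = 1.0 - u - v
    # Two control points per edge, each derived from one corner and its normal.
    b210, b120 = edge_point(p1, p2, n1), edge_point(p2, p1, n2)
    b021, b012 = edge_point(p2, p3, n2), edge_point(p3, p2, n3)
    b102, b201 = edge_point(p3, p1, n3), edge_point(p1, p3, n1)
    # Center control point: edge average pushed away from the flat centroid.
    edges = (b210, b120, b021, b012, b102, b201)
    e = combine(*[(1.0 / 6.0, b) for b in edges])
    c = combine((1.0 / 3.0, p1), (1.0 / 3.0, p2), (1.0 / 3.0, p3))
    b111 = combine((1.5, e), (-0.5, c))
    # Evaluate the cubic Bezier triangle.
    return combine(
        (w ** 3, p1), (u ** 3, p2), (v ** 3, p3),
        (3 * w * w * u, b210), (3 * w * u * u, b120),
        (3 * w * w * v, b201), (3 * u * u * v, b021),
        (3 * w * v * v, b102), (3 * u * v * v, b012),
        (6 * w * u * v, b111),
    )
```

With all three normals equal to the face normal the result falls back to plain linear interpolation, so a flat triangle stays flat; with normals that tilt away from each other the surface bulges out between the corners.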

The good thing about TruForm is that it can be added to an existing rendering engine very simply, with just a few API calls. However, the hardware component can only be managed through rendering state, which limits tessellation parameter control to a per-object basis and also means that changing the tessellation configuration breaks batches.

Another advantage of TruForm is that it works on transformed vertices, which means that we can safely use tessellation with complex vertex processing techniques like skeletal animation without worrying about the huge transformation costs that would be inherent to a post-tessellation vertex shader.

Another issue that must be mentioned whenever hardware tessellation is discussed is crack-free rendering. As tessellation usually works on individual primitives, there is often no guarantee that no cracks will appear between adjacent polygons after tessellation is applied. In the case of TruForm this is relevant only if cubic position interpolation is used, as only that mode alters the vertex positions themselves. When this position evaluation mode is used, the artists must ensure that vertices on common edges have the same normal. This is quite a limiting factor in certain situations but should not cause any problems in most of the common use cases.

A major drawback of ATI’s original N-Patch implementation is that the tessellation evaluation is not programmable and offers little to no control over what the resulting vertices will look like. This meant that novel graphics techniques like displacement mapping could not be implemented with it. While this is quite a severe limitation, TruForm was still a great feature for increasing the detail of already existing and upcoming game titles.

Unfortunately TruForm wasn’t that well received by the developer community due to the additional burden it placed on artists and the lack of flexibility on the programming side. Still, I think the most important factor was the lack of wide adoption of the feature by other relevant vendors.

Beyond TruForm

After the original appearance of hardware tessellation there were several further efforts to make geometry tessellation a popular feature in real-time graphics. Besides ATI, Matrox also released GPUs with N-Patch support, and ATI improved its own TruForm feature with the appearance of the Radeon 9700. These cards were able to do several important things that the original TruForm was lacking.

First, they provided means to do the tessellation evaluation based on a texture, which enabled the implementation of displacement mapping. Second, and in my opinion even more important, they supported adaptive tessellation, which means that the tessellation factor was calculated dynamically based on the distance from the camera. Finally, the new tessellation implementations also allowed a continuous tessellation mode, thus allowing seamless transitions between various tessellation levels.
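As a rough illustration of distance-based adaptive tessellation, and of the difference between a discrete and a continuous mode, consider the following Python sketch. The function `tessellation_level`, its parameters and the linear falloff are all assumptions of mine; I do not know the exact formula these cards used.

```python
import math

def tessellation_level(distance, near, far, max_level, continuous=True):
    """Map camera distance to a tessellation level between 1 and max_level.

    Objects at or closer than `near` get max_level, objects at or beyond
    `far` get level 1, with a linear falloff in between (an assumption).
    """
    t = (distance - near) / (far - near)
    t = min(max(t, 0.0), 1.0)
    level = 1.0 + (max_level - 1.0) * (1.0 - t)
    if continuous:
        # Fractional levels allow a smooth transition between detail levels.
        return level
    # Discrete mode snaps to whole levels, which can cause visible popping.
    return float(math.floor(level))

for d in (0.0, 30.0, 50.5, 200.0):
    print(d, tessellation_level(d, 1.0, 100.0, 15.0),
          tessellation_level(d, 1.0, 100.0, 15.0, continuous=False))
```

In the continuous case the level changes gradually as the camera moves, while the discrete variant jumps from one integer level to the next.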

Unfortunately I don’t know of any OpenGL extensions that exposed this functionality, which also means that I’ve never had a closer look at it, so if you are interested in these technologies you’ll have to search around the internet a little.

Geometry Shaders

After the failure of the early attempts to introduce hardware tessellation to the general public, the appearance of Shader Model 4.0 capable graphics cards made many developers think that we were going to see hardware tessellation in the form of geometry shaders. Some cards on the market at this time really did have a new generation of tessellation hardware, but it had nothing to do with geometry shaders; I will talk about it later…

Many developers incorrectly saw a practical tessellator in the geometry shader when it appeared. While it is true that a geometry shader can be used to perform geometry amplification, several hardware limitations make this approach rather inefficient in practice. Anyway, first I will talk about how geometry shaders can be used for tessellation and after that I will explain why not to do so.

The geometry shader is a new programmable stage introduced by Shader Model 4.0 that operates on whole primitives after vertex processing and before rasterization. It has a fixed input and a fixed output primitive type, and the two don’t have to match. This means it is possible to emit triangles even though the input primitives were points.

The greatest feature of geometry shaders is that they can output a dynamically adjustable number of geometric primitives based on the input primitive, including even the possibility to discard the current primitive. The former allows us to do a certain amount of geometry amplification with them and evaluate the output primitives as we wish (of course, within the boundaries of what a shader can do). The only constraint is the upper limit of the output buffer available on the target hardware. This is, in fact, a rather severe constraint, especially with a large number of vertex attributes.
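A bit of arithmetic shows how tight this output limit is. The Python sketch below assumes a budget of 1024 output components per geometry shader invocation, which, as far as I recall, is the minimum that OpenGL 3.2 requires for GL_MAX_GEOMETRY_TOTAL_OUTPUT_COMPONENTS; actual hardware may offer more. It also assumes the subdivided triangle is emitted as one triangle strip per row. The function names are hypothetical.

```python
def max_output_vertices(total_output_components, components_per_vertex):
    """How many vertices fit into the geometry shader output budget."""
    return total_output_components // components_per_vertex

def strip_vertices(segments):
    """Vertices needed to emit a uniformly subdivided triangle as row strips.

    A row with m upright triangles holds 2m - 1 triangles and needs
    2m + 1 strip vertices; summing m = 1..n gives n * (n + 2).
    """
    return segments * (segments + 2)

def max_segments(total_output_components, components_per_vertex):
    """Largest number of edge segments that still fits into the budget."""
    budget = max_output_vertices(total_output_components, components_per_vertex)
    n = 0
    while strip_vertices(n + 1) <= budget:
        n += 1
    return n

# Position only (4 components) versus position + normal + texcoord (9 components).
print(max_segments(1024, 4), max_segments(1024, 9))
```

Under these assumptions a shader that outputs only a position can split each edge into at most 15 segments, and adding a normal and a texture coordinate reduces that to 9.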

In this scenario the geometry shader acts as both the tessellator and the evaluator, as it is used for the geometry amplification as well as for the interpolation of the vertex attributes. This provides almost complete flexibility over how we would like to implement our tessellation algorithm. We can choose the tessellation factor as part of the programmable stage, so adaptive tessellation is no problem. We can also easily add displacement mapping or any other technique to control how our newly generated primitives will be positioned and oriented.

Now that we have seen how easy and flexible a geometry shader based tessellation implementation is, let’s see the dark side of it…

First of all, as the geometry shader is a revolutionary feature compared to earlier programmable GPU capabilities, it suffers from the fact that it doesn’t really fit into the existing architecture. Previously, every fixed-function and programmable hardware component on the GPU had a fixed amount of input and output data, making it possible to create a kind of synchronous pipeline architecture. This way it was rather easy for the execution dispatcher to share the workload over the computing units and keep them busy all the time (or at least most of the time).

The programmable amount of output data made possible by geometry shaders somewhat breaks this synchronous architecture. This means that a more dynamic dispatching mechanism is required to control the consumption of the data output by it. In order to achieve this there are two important issues:

First, there should be a temporary buffer that will hold the output as we cannot guarantee that the outputs can be immediately fed to the subsequent stages of the rendering pipeline. This has been implemented by memory buffers and/or caches by the various vendors.

Second, because the geometry shader can be executed in parallel (at least in theory) and different instances of the geometry shader can output different numbers of primitives, there can be problems with the synchronization of data emissions and with the order in which output primitives are placed in the output buffer.

AMD solved these problems by introducing a new cache that is meant to handle the special nature of primitive emissions executed by the geometry shader. Unfortunately NVIDIA’s implementation is much more limited and, as far as I can tell, it may result in geometry shader instances being executed on only one or just a few computing units, which can severely degrade performance in the case of tessellation. This is the reason why we have to specify in GLSL the maximum number of vertices that our geometry shader can output. This is used as an input for NVIDIA drivers to plan the necessary storage strategy for the geometry shader, and in fact it has no effect at all on AMD GPUs. So if you want your geometry shader to run faster on AMD GPUs, just set this maximum limit as high as possible ;)

There is another problem with a geometry shader based tessellator implementation: the geometry amplification is done iteratively within a single shader invocation, which is quite a waste on a highly parallel processor architecture like that of the GPU. This results in a noticeable amount of delay.

Coming back to how geometry shaders break the synchronous nature of GPUs, I would like to talk about how the number and type of emitted primitives affect the overall performance of the rendering pipeline (not even considering the aforementioned negative factors).

The best performance can be achieved when the input and output primitive types of the geometry shader are the same (e.g. triangle -> triangle). Besides that, GPUs usually have an accelerated path for outputting four vertices for one vertex input (e.g. point -> triangle strip), which is useful for rendering point sprites or billboards. All the other combinations should be avoided if possible.

I hope I was clear enough to convince all of you that geometry shaders are not meant for tessellation. It really drove me mad to see that everybody was talking about this particular use case when in fact geometry shaders are much more useful in other situations.

Tessellation on HD2000 series

The true successor of the original hardware tessellation feature reappeared with the Xbox 360’s GPU and then on the PC with the introduction of the AMD Radeon HD2000 series. This hardware generation came equipped with a fixed-function hardware tessellator similar to that of the Radeon 8500 but with added programming flexibility. The functionality is accessible in OpenGL through the extension GL_AMD_vertex_shader_tessellator but, again, it didn’t make its way into core OpenGL or into DX10 due to the lack of support on NVIDIA GPUs. The extension in fact turns the traditional vertex shader into a tessellation evaluation shader (or a domain shader in DX terminology), and even though the extension does not explicitly name it as such, I will sometimes refer to it this way.

Still, one important restriction of this functionality has to be mentioned, namely that this tessellation mechanism cannot be used together with geometry shaders due to hardware limitations. My guess is that the tessellator output is most probably emitted to the same cache that is used by geometry shaders (somebody from AMD can confirm this or correct me).

The upgraded vertex shader introduced by the extension is provided with barycentric coordinates generated by the tessellator and with the control point indices (three indices in case of a triangle and four in case of a quad). The actual control point data is then fetched from within the vertex buffers used.
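The evaluation step described above can be mimicked on the CPU. The following Python sketch is only a model of the idea: the "vertex buffer" is a plain list, the index tuples stand in for the control point indices provided by the tessellator, and the function names `evaluate_triangle` and `evaluate_quad` are hypothetical, not the built-in names used by the extension.

```python
def evaluate_triangle(vertex_buffer, indices, bary):
    """Evaluate a tessellated vertex inside a triangle patch.

    indices: three control point indices into vertex_buffer.
    bary:    barycentric weights (one per control point, summing to 1).
    """
    # Explicit fetch of the control points, as the shader has to do.
    corners = [vertex_buffer[i] for i in indices]
    return tuple(sum(b * c[k] for b, c in zip(bary, corners)) for k in range(3))

def evaluate_quad(vertex_buffer, indices, u, v):
    """Evaluate a tessellated vertex inside a quad patch (bilinear).

    indices: four control point indices, assumed to go around the quad.
    """
    p00, p10, p11, p01 = [vertex_buffer[i] for i in indices]
    weights = ((1 - u) * (1 - v), u * (1 - v), u * v, (1 - u) * v)
    return tuple(
        sum(w * p[k] for w, p in zip(weights, (p00, p10, p11, p01)))
        for k in range(3)
    )

vertex_buffer = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0), (2.0, 2.0, 0.0)]
print(evaluate_triangle(vertex_buffer, (0, 1, 2), (0.5, 0.5, 0.0)))
print(evaluate_quad(vertex_buffer, (0, 1, 3, 2), 0.5, 0.5))
```

A real evaluation shader would of course do more than linear interpolation at this point, for example sampling a displacement map, but the fetch-then-interpolate structure stays the same.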

One important disadvantage of the tessellation architecture provided by the extension is that there is no programmable stage before the tessellator, which prevents us from doing expensive per-vertex operations on the control cage (e.g. skeletal animation). Fortunately, it is very easy to overcome this limitation, as on this hardware generation we already have transform feedback (stream out in DX terminology) and auto draw at our disposal. This way we can simply use an additional rendering step and an auxiliary buffer to make things work as expected.

The maximum tessellation level is 15, and there are both a discrete and a continuous tessellation mode, selectable using API calls.

While this looks already almost like the tessellation mechanism introduced by DX11, the key disadvantage is the lack of adaptive tessellation, that is the possibility to algorithmically define the tessellation level on the GPU. This makes it rather impractical for dynamic LOD based tessellation level selection as the required API calls would be batch breakers.

Still, I think this feature should have caught the attention of developers, but it seems that it remained only a tool for tech demos, as developers preferred to wait for the appearance of DX11, which forced NVIDIA to finally implement their own hardware tessellator.

Tessellation shader

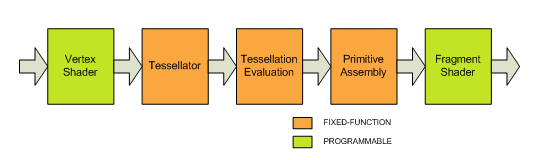

Finally, with the advent of Shader Model 5.0 GPUs we have our “official” hardware tessellation. The functionality is exposed in OpenGL via the extension GL_ARB_tessellation_shader, which was introduced as part of the fourth major revision of the specification. The extension introduces two new shader types: the tessellation control shader (referred to as hull shader in DX11) and the tessellation evaluation shader (domain shader in DX terminology).

The new feature enables programmable tessellation levels up to 64 via the newly introduced tessellation control shader, which allows us to process our control points in a parallel yet synchronized manner.

This final revision of the feature allows us to use all the advanced techniques needed for a novel tessellation based renderer like adaptive continuous tessellation and displacement mapping. Also the vertex shader is completely separate and even though many of the vertex shader tasks have to be moved to the tessellation evaluation shader, complex operations like skeletal animation can be simply kept in the vertex shader.

Another thing is that Shader Model 5.0 hardware lifts the restriction on using tessellation and geometry shaders together, so algorithms that rely on geometry shaders can be freely combined with tessellation.

Still, implementing a tessellation evaluation shader does not really differ from the Shader Model 4.0 case: we use the barycentric coordinates generated by the tessellator in the same way, only we no longer need explicit vertex fetches because the primitive data is handed to us from the start.

Crack-free rendering is still an issue, however, especially in the case of the programmable evaluators available in the last two implementations. OpenGL 4.0 addresses this issue by introducing the precise qualifier, which prevents the shader compiler from applying optimizations like operation reordering or fused multiply-add that may change floating-point rounding, thus at least guaranteeing that the same sequence of operations will result in the same number. Any further steps against cracks introduced by tessellation are the responsibility of the programmer.
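Why the order of operations matters can be shown with ordinary floating-point arithmetic. The Python sketch below is not shader code; it only demonstrates the underlying effect on the CPU, with a hypothetical helper `ordered_sum` that accumulates strictly in the written order.

```python
def ordered_sum(terms):
    """Accumulate strictly left to right, without any reordering."""
    total = 0.0
    for term in terms:
        total += term
    return total

# Imagine the same three contributions to a vertex on a shared edge,
# accumulated in a different order by the two adjacent patches.
contributions = [0.1, 0.2, 0.3]
patch_a = ordered_sum(contributions)
patch_b = ordered_sum(list(reversed(contributions)))

print(patch_a == patch_b)                      # the two orders disagree
print(patch_a == ordered_sum(contributions))   # the same order always agrees
```

The two orders give results that differ in the last bit, which on a shared edge is enough to open a crack. Keeping the same sequence of operations always reproduces the same number, so one possible precaution (my suggestion, not something the specification mandates) is to write the evaluation so that both patches feed the data of a shared edge through the same operations in the same order.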

Conclusion

We’ve seen how various GPU generations addressed the issue of hardware tessellation as well as in what form they are available in various OpenGL implementations. I’ve also tried to collect the most relevant advantages and disadvantages of the implementations in various hardware generations.

There was also a completely separate discussion about geometry shaders and their use for geometry amplification and I hope I managed to convince everybody that it is not the way to go.

We’ve also briefly mentioned some of the major issues that may raise concerns regarding the use of tessellation. A thorough examination of these issues needs a much longer discussion that is out of the scope of this article, but it is an interesting topic for a future one.

Unfortunately, due to lack of time I didn’t prepare any sample application demonstrating the usage of the various tessellation implementations, so this also has to be postponed to a future article.

For further reading, and especially for sample applications, I recommend checking out the following links:

Hardware Tessellation on Radeon in OpenGL @ Geeks3D

First Contact with OpenGL 4.0 GPU Tessellation @ Geeks3D